Heritage committee recommends copyright protections for content scooped up by bots

By Ahmad Hathout

Innovation, Science and Economic Development (ISED) inquired about what an “opt-out” mechanism would look like for AI-generated news summaries on platforms such as Google, according to a document obtained by Cartt, before the standing committee on Canadian Heritage recommended copyright protections for news content scooped up by bots.

The department, which has been touting Canada’s commitment to AI research and development, had prepared a note ahead of a meeting last fall with News Media Canada, the association for Canadian media that has been sounding the alarm on the claimed negative impact American tech platforms have been having on Canadian news businesses.

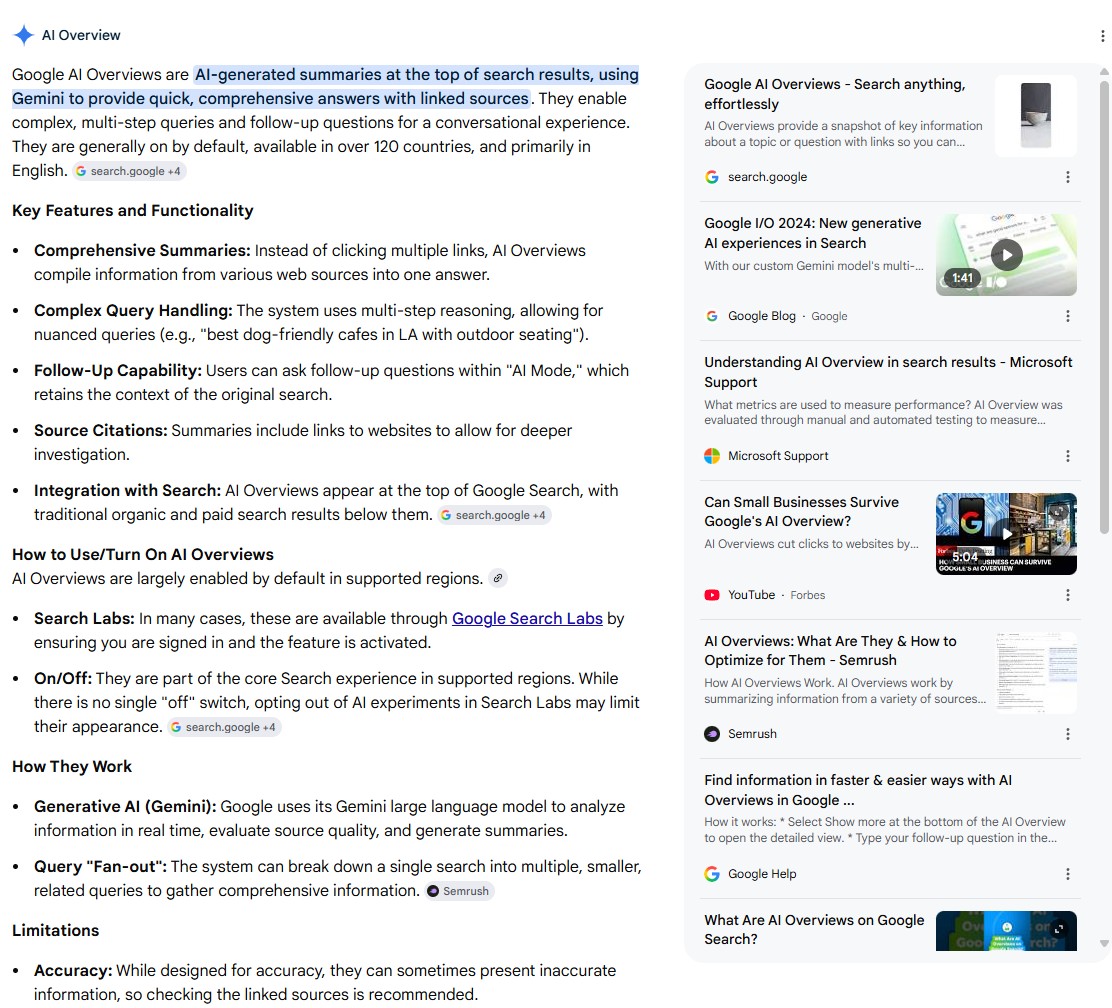

One talking point, according to the note, was the problem with AI-generated news summaries, such as on Google’s AI Overviews, which launched in 2024. News Media Canada and others in the news industry have for months said these summaries are driving traffic away from their websites, which often operate business models that require clicks. While AI Overviews provides the sources to the right of the summaries, Canadians are not necessarily clicking through, and there’s no way to opt out of them – at least not without paying a steep price, allege people in the industry.

“What would an effective, fair opt-out mechanism from AI overviews look like, and how can government ensure platforms do not retaliate against publishers that choose this option?” read one suggested question by ISED.

“If publishers block Google’s AI crawler, they find themselves de-indexed or unable to attract traffic to their sites via search,” Paul Deegan, president and CEO of News Media Canada, told Cartt, which inquired about the substance of the meeting. “We believe the Minister of Industry should ask the Competition Bureau to conduct a study into the state of competition with respect to search and AI. It is in the public interest to have Googlebot split into separate crawlers – one for AI and one for search. That would be a pro-competitive safeguard to help level the playing field between publishers and Google.”

Google, which did not respond to a request for comment, agreed to deliver $100 million annually to the Canadian Journalism Collective for the luxury of hosting Canadian news on its platforms. The deal was made under the Online News Act (Bill C-18), a 2023 law that created a mandatory bargaining framework for platforms to compensate news publishers for hosting their work. The money started trickling down to news businesses last year.

But, according to Rudyard Griffiths, there are strings attached.

The publisher of news and commentary website The Hub claimed news organizations have to serve up their content to feed the world’s largest search engine’s bots – even when that content is behind a paywall.

“A condition of receiving funds from the Journalism Collective, which was established to disburse the funds, is that you have to make all the content you produce available to Google, to one specific AI company, one specific LLM [large language model] and arguably to the most powerful one in the marketplace: the originator of AI Overviews within your Chrome browser and the creator of Google AI Mode, which is something that the company is clearly investing in and that it is rolling out across browsers in Canada and around the world,” Griffiths told the Canadian Heritage committee studying the impact of AI on the creative industries in October.

“If you choose to block its bots that are scraping your content for its AI LLM, it will block you from all search results,” he claimed.

That testimony helped inform a Canadian Heritage committee report, released April 14, that recommends the federal government protect copyrighted work in accordance with the “ART principle” – authorization, remuneration and transparency – such as by ensuring a “clear opt-in consent requirement for the use of copyrighted works in the training of artificial intelligence systems, ensuring that creators’ works may not be used for text and data mining [TDM] or model development without their prior authorization.”

The committee recommended that the government ensure the scope of the Copyright Act applies to AI-generated content to guarantee copyright protection and mandate greater transparency from AI developers regarding copyrighted works used to train their models, including disclosure of training data sources, to enable proper authorization and licensing.

Culture Minister Marc Miller was aware of the issue before the report. When asked whether the use of copyrighted materials for AI training violates copyright law, he said, “The current copyright law does and should protect those that have created material and people need to be compensated properly,” according to a report from The Canadian Press.

That, to Deegan, is a positive position in light of a report released by ISED last year that highlighted views from tech platforms which wanted a clarification in copyright law to allow for a TDM exception.

“Some stakeholders expressed support for an infringement exception for the use of copyright-protected works in TDM and AI training,” read the report, which followed the department’s consultation on “Copyright in the Age of Generative Artificial Intelligence.”

“Technology industry stakeholders generally supported a TDM exception, be it a new standalone exception or a broadening of an existing general exception, such as fair dealing, to address relevant uses,” the report added.

But creators oppose the use of their content in AI without consent and compensation, according to the report.

“Individual creators and the cultural industries argued that, as the generative AI market develops, rights holders must be able to consent to the use of their content in the training of AI and should receive credit and compensation for these uses,” the report said. “They also expressed the view that there is no existing exception, nor should there be an exception, covering the use of copyright-protected works for TDM purposes. Some asked for clarifications to this effect in the copyright framework.”

An ISED spokesperson told Cartt that, “The government recognizes creators are concerned about the training of artificial intelligence (AI) models, and the impact of content generated by AI … The government is committed to ensuring that its policies remain responsive to the modern needs of creators, rightsholders and consumers, while reflecting the realities of today’s digital world.”

Deegan said there should not be an amendment to copyright law to include a TDM exception for what he called “the theft of our IP … happening on an industrial scale” with “many culprits” who, according to one recent report, have issues with attribution.

McGill University’s Centre for Media, Technology and Democracy – which examines the information sphere broadly, including how Canadians get their news – conducted studies to analyze how these AI models interact with information and what they spit out.

The centre ran two studies in February and March 2026: one that tested the four major AI models – ChatGPT, Gemini, Claude, and Grok – on 2,267 real Canadian news stories in both official languages for a total of a little more than 18,000 queries to measure what the models absorbed and whether they attributed it. A second study asked the same models about 140 specific recent articles from seven Canadian outlets across 3,360 experimental conditions to determine whether they produced viable substitutes for current journalism and whether they credited the source.

The four models provided no source attribution 82 per cent of the time and covered enough of the original reporting in the second study to substitute for the source in 54 to 81 per cent of cases, the AI News Audit report said. “Models linked to Canadian news sites in 29 to 69% of responses, but named the originating outlet in the response text in only 1 to 16% of cases,” it said. “When we named the outlet and asked the same models for citations, attribution rates reached 74–97%.”

“There is no compensation obligation, no attribution requirement, and no transparency about what has been ingested,” the AI News Audit report says.

Besides raising policy questions about attribution and licensing, the report concludes that the statutory review of the Copyright Act will need to address AI training directly, including the question of “whether large-scale commercial ingestion of copyrighted journalism falls within the [fair dealing] doctrine’s enumerated purposes”; and address whether the Online News Act “can or should be extended to encompass AI companies that synthesize and distribute journalism, and if so, what obligation structure is appropriate for a form of intermediation that differs fundamentally from social media.”

“It seems to me that, effectively, the behavior that we documented in the report is de facto that, actually, platforms are in a more direct way than social media platforms providing access to the news,” Aengus Bridgman, director of the Media Ecosystem Observatory at the centre and one of the two authors of the AI News Audit report, told Cartt in an interview. “There’s also just the question of how visible that linking through is and what [are] the clickthrough rates in conversations with publishers,” he said. “My sense is that the clickthrough rate is even worse on that than what it has been on social.”

As for the question of whether or not the Online News Act already applies to these bots, the CRTC told Cartt that the law “defines making news available broadly to include the reproduction of news content, in whole or in part, or facilitating access to it by any means, including indexing, aggregation, or ranking.”

In fact, “the product that is being offered by the AI companies is a very effective substitute,” Bridgman told us, citing the report’s findings. “You ask a question about a recent news story and you get, often, very similar facts, but often a good enough replacement for the original news story, and so why would you click, right? Why would you go through? Why would you look at the source? You’ve been provided that information.”

Griffiths told the Heritage committee that while AI has allowed investigative journalists to synthesize large volumes of information, AI summaries have significantly reduced referral traffic from internet search engines, with studies suggesting that “upwards of 60% of Google searches are now zero click.”

While Google agreed to put money into news, the other of the two digital ad giants, Meta, has not played ball. The parent company of Facebook opposes the Online News Act and has refused to come to the bargaining table. Instead, it has said it pulled Canadian news from its platforms, despite claims it hasn’t entirely done so.

If there is resistance, Deegan, who said his organization fully supports Canada’s pursuit of AI leadership, said the federal government can use its procurement arm as leverage.

“Procurement is one of the strongest tools in the Government’s policy arsenal,” he said. “Public Services and Procurement Canada and Treasury Board should work together to ensure those on the government’s list of interested artificial intelligence suppliers sign a ‘supplier commitment’ that includes a commitment to the principles of transparency, consent, and attribution with respect to all copyright-protected source content.”

It’s a twice-told tale: when Canadian regulation touches American technology, there is resistance. But Bridgman said there is also an opportunity for collaboration. “What we really kind of focus on is about figuring out a way to work in this new area, in this new arena, not in an opt-out way, but in a, ‘okay, we’re all here at the same table, maybe we don’t want to be, but Canadians are likely to continue to get their news and probably increase their news consumption via agent or summary search and so, given that, how are we going to ensure that an already struggling journalism industry doesn’t completely collapse to the overall deficit of democracy.’”

And it’s not just Canadian journalism that appears to be captured by this sentiment. The European Commission announced late last year that it opened an antitrust inquiry into whether Google scraped data from websites without providing appropriate compensation or an opt-out. This came after an alliance of NGOs, associations and organizations from the media and digital industry filed a complaint against the AI tool for alleged violations of the Digital Services Act, which was followed by the Italian federation of newspaper publishers filing a similar complaint to the country’s communications regulator late last year, calling for action against what it called the “traffic killer.”

“News outlets are providing something that the AI platforms value enormously, and we know they value it enormously because this is what they provide to people who visit and who pay for their service and who ask questions,” Bridgman said. “They surface the news content consistently and they’re doing so because they know that it’s high-quality content, and they know that, ultimately, people want to be informed and don’t want to be lied to and don’t want to be misled.”

Photo of Aengus Bridgman, via McGill University’s Centre for Media, Technology and Democracy